Can anything unsafe execute without AISFY?

AI Governance by Design for Real-World Execution

AISFY is an AI firewall that sits between any AI system and real-world execution — blocking unsafe actions and allowing only those that meet regulatory, business, and accountability rules.

Whether content is created by AI, agencies, or humans, AISFY ensures nothing goes live without the right safeguards in place.

Access is granted selectively for regulated environments.

Ready to Aisfy?

Answer a few questions to get a customized Aisfy App for your needs.

The Problem Isn’t AI — It’s Uncontrolled Execution

AI is already being used across marketing, content, automation, and agentic workflows.

The risk doesn’t come from using AI. It comes from letting AI act without structure, visibility, or accountability.

In regulated industries like healthcare:

Most teams discover these risks after execution, when it’s too late. AISFY exists to catch them before execution occurs.

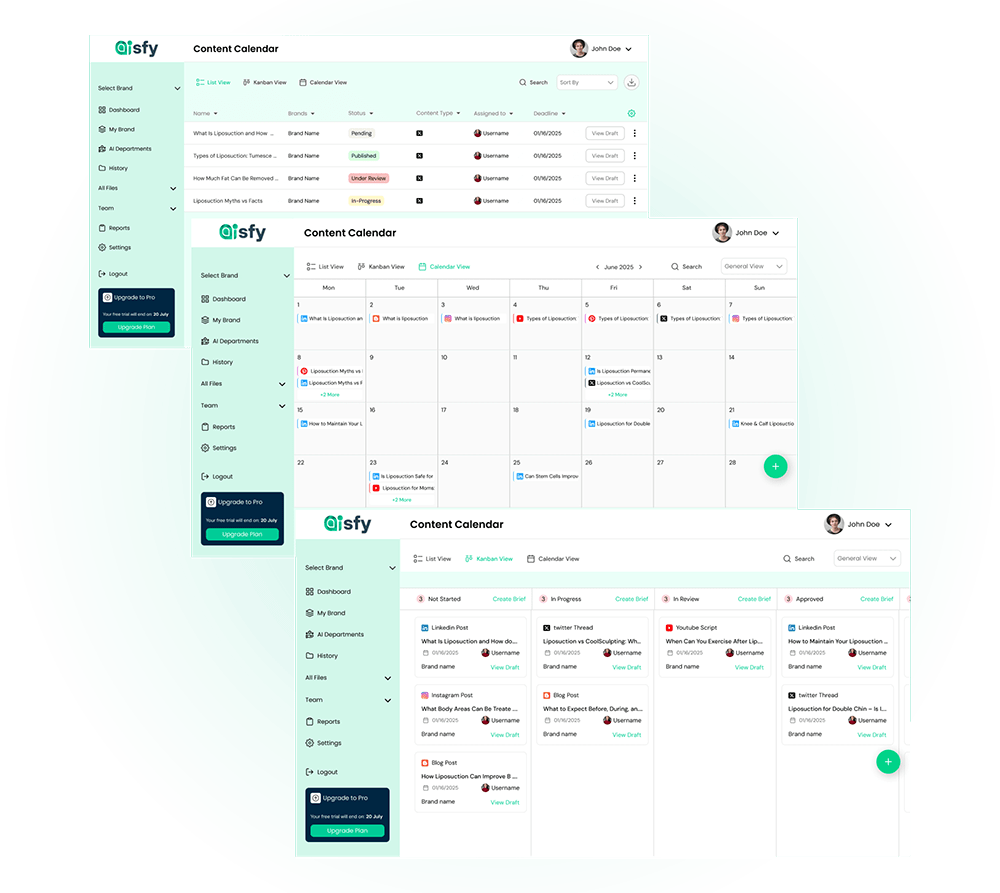

See what AI actions would be allowed — and what would be blocked — before execution.

Governance by Design — Not After the Fact

AISFY implements governance by design.

That means:

Nothing is blocked without warning.

Nothing is allowed without meeting clear rules.

If an action will be blocked, you know before you try to publish.


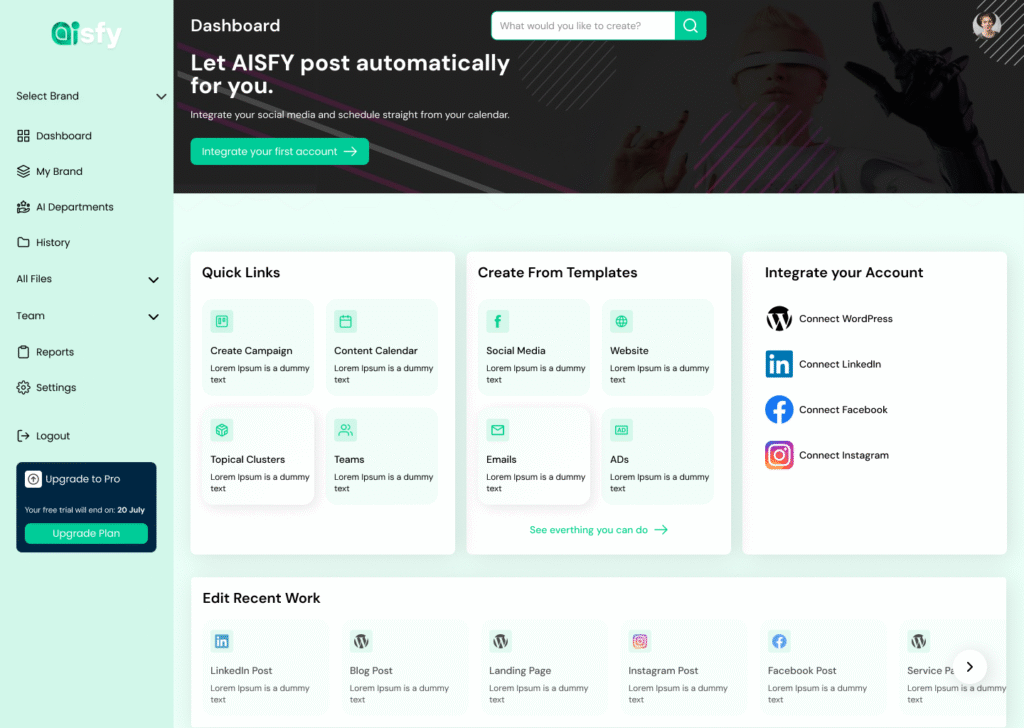

How AISFY Works

AISFY doesn’t replace your tools.

It governs how they execute.

What AISFY Controls — and What It Doesn’t

AISFY Controls:

AISFY Does Not:

AISFY decides yes or no — nothing more, nothing less.


Why Teams Trust AISFY

AI adoption is inevitable.

Uncontrolled execution is not sustainable.

In the same way:

AI execution control will become mandatory for organizations.

AISFY is built for that future.

Once installed, AISFY becomes the final checkpoint before action.

Removing it means returning to unmanaged risk.

Where practitioners, regulators, and builders discuss AI execution safety.

AISFY is designed for:

Enterprises deploying AI across workflows

Regulated organizations under compliance pressure

Teams that need speed without exposure

Founders and operators who want AI aligned with responsibility

The Vision

To build a world where:

AI can move fast

Humans stay accountable

Regulation doesn’t slow innovation

The control point stays the same.

Why AISFY Is Access-Controlled

AI governance cannot be understood through screenshots or feature lists.

Access is granted so teams can see:

How execution is evaluated

How enforcement decisions are made

What gets blocked, and why

How accountability is recorded

AISFY is not self-serve because execution control must be understood correctly.

Access is reviewed. Not all requests are accepted.

AISFY is an AI firewall that enforces governance by design, ensuring AI can act only when it is safe, compliant, and accountable.

AISFY is your trusted AI execution control layer.